Why Manual Facebook Ads Testing Has Become the Real Bottleneck

Look at any Facebook Ads account running serious spend, and you will find the same pattern: teams claim they test constantly, yet in practice they launch only a handful of new creatives each week. Not because they lack ideas, but because turning one idea into several live ads is slow and tedious.

A typical manual workflow still looks like this:

- Write one concept

- Create two or three variations

- Upload them one at a time

- Wait several days for results

By the time those results arrive, the account has already lost momentum. Meta's algorithm rewards accounts that deliver a consistent stream of new creative signals. Manual workflows produce tests in sporadic bursts.

Meta's family of apps now reaches 3.29 billion daily active people (Meta Q3 2024). The average Facebook ad CTR across all industries is 0.90% according to WordStream's Facebook advertising benchmarks. In that environment, the only durable advantage is the ability to discover better creative faster than competitors. Manual testing pipelines cannot keep pace.

The bottleneck is not targeting. It is not bidding. It is how long it takes for an idea to become a running experiment.

When testing velocity is slow, learning velocity is slow. When learning velocity is slow, scaling becomes a guessing game.

The Pattern That Appears Across Most Ad Accounts

Instead of fixing the testing pipeline, teams typically reach for optimization settings. The results are predictable.

| Symptom | Common Fix | Why It Fails | Better Approach |
|---|---|---|---|
| Few new creatives each week | Increase budget optimization | Budget does not fix weak creative signals | Increase testing throughput |
| Ads fatigue quickly | Duplicate winning ads | Duplicates introduce no new learning | Generate new variations continuously |
| Slow performance insights | Wait longer before decisions | Time does not make tests better | Launch more experiments earlier |
| Media buyers overloaded | Add more campaign rules | Rules do not speed up idea generation | Automate creative experimentation |

Nielsen and Meta research found that creative quality accounts for up to 56% of a campaign's ROAS. Yet most automation investment goes into the optimization layer — managing ads that already exist — rather than the creative generation layer that determines whether those ads perform in the first place.

This is why many automation tools miss the mark. They automate the wrong layer.

What Facebook Ads Test Automation Actually Means in Practice

When people hear "automation," they usually picture rules like:

- Pause ads below a ROAS threshold

- Increase budget after a certain number of conversions

- Adjust bids based on time of day

These are useful, but they are shallow. They manage existing ads. They do not address the testing process.
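To make the contrast concrete, here is a minimal sketch of the first two rules from the list above. The `AdStats` fields and every threshold are hypothetical, chosen only for illustration; this is not how any particular tool implements its rules.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Hypothetical per-ad totals pulled from a reporting export."""
    ad_id: str
    spend: float
    revenue: float
    conversions: int

    @property
    def roas(self) -> float:
        # ROAS is revenue divided by spend; zero spend is treated as zero ROAS.
        return self.revenue / self.spend if self.spend > 0 else 0.0


def decide(ad: AdStats, min_roas: float = 1.5,
           min_spend: float = 50.0, scale_after: int = 20) -> str:
    """Return 'pause', 'increase_budget', or 'keep' (thresholds are assumptions)."""
    # Only judge an ad once it has spent enough to say anything about it.
    if ad.spend >= min_spend and ad.roas < min_roas:
        return "pause"
    if ad.conversions >= scale_after and ad.roas >= min_roas:
        return "increase_budget"
    return "keep"


ads = [
    AdStats("ad_a", spend=100.0, revenue=80.0, conversions=3),
    AdStats("ad_b", spend=200.0, revenue=600.0, conversions=25),
    AdStats("ad_c", spend=10.0, revenue=0.0, conversions=0),
]
decisions = {ad.ad_id: decide(ad) for ad in ads}
print(decisions)
```

Every branch takes an existing ad as input. Nothing in a rule like this creates a new hypothesis to test.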

Real Facebook ads test automation covers three operational layers:

- Creative generation — producing variations at scale from a single brief

- Experiment deployment — launching many tests quickly and without manual errors

- Learning loops — feeding performance insights back into the next round of creative generation

This approach turns ad campaigns into a continuous experimentation system. Meta Blueprint emphasizes creative diversity and regular refresh as foundational practices for sustained campaign performance. Automated testing pipelines are the practical implementation of that guidance.

The real work happens outside Ads Manager. Systems that turn one marketing hypothesis into dozens of live experiments within minutes produce sustained account growth. A single session in Ads Manager does not.

For a deeper dive into how to build a Facebook Ads bulk testing system, check out this detailed guide.

The Automated Testing Pipeline: From One Idea to Dozens of Experiments

The core principle of automated testing is this: ideas become inputs to a pipeline, not individual ads to be managed one at a time.

Take a simple marketing concept: "Promote faster checkout for ecommerce stores."

A manual workflow produces two or three ads based on this idea.

An automated pipeline treats that concept as a starting point and expands it across multiple angles:

- Speed-focused messaging ("Ship orders 40% faster")

- Conversion-rate messaging ("Turn browsers into buyers at checkout")

- Operational efficiency messaging ("Cut checkout abandonment in half")

- Cost-saving messaging ("Reduce cart abandonment costs this quarter")

Each angle then generates variations across three dimensions:

- Headline variants (3 per angle)

- Hook variants (2-3 per angle)

- CTA variants (2 per angle)

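One idea quickly becomes 15 to 30 distinct creatives, even when only a subset of the combinations is launched: crossing every headline, hook, and CTA yields 12 to 18 creatives per angle, or 48 to 72 across all four angles. Advertisers running five or more ad variations per audience see up to 25% lower CPA compared to those running fewer, so the incremental cost of generating additional variations is easily justified by the performance improvement.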

Instead of debating which version might work best, the system launches all of them and lets performance data make the call. This increases signal density in the account. The more experiments running simultaneously, the faster you identify which creative patterns, angles, and formats are driving results.
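The expansion from angles to creatives can be sketched as a cross-product of the three dimensions. The data layout and function names below are assumptions, and most of the copy is made up for illustration. Only two of the four angles are included to keep the example short.

```python
from itertools import product

# Illustrative copy only; structure and strings are hypothetical.
ANGLES = {
    "speed": {
        "headlines": [
            "Ship orders 40% faster",
            "Checkout in half the time",
            "Faster checkout, fewer drop-offs",
        ],
        "hooks": [
            "Slow checkout costs you sales.",
            "Every extra click loses a buyer.",
        ],
        "ctas": ["Start free trial", "See how it works"],
    },
    "conversion": {
        "headlines": [
            "Turn browsers into buyers at checkout",
            "More carts, more completed orders",
            "Stop losing buyers at the last step",
        ],
        "hooks": [
            "Most carts never become orders.",
            "Your checkout is your last pitch.",
            "Shoppers decide in seconds.",
        ],
        "ctas": ["Start free trial", "Book a demo"],
    },
}


def expand_angle(angle, parts):
    """Return every headline x hook x CTA combination for one angle."""
    combos = product(parts["headlines"], parts["hooks"], parts["ctas"])
    return [
        {"angle": angle, "headline": headline, "hook": hook, "cta": cta}
        for headline, hook, cta in combos
    ]


def expand_concept(angles):
    """Flatten all angles of one concept into a single list of creatives."""
    creatives = []
    for angle, parts in angles.items():
        creatives.extend(expand_angle(angle, parts))
    return creatives


creatives = expand_concept(ANGLES)
print(len(creatives))  # 3*2*2 + 3*3*2 = 30
```

Two angles already produce 30 creatives here. In practice a team might launch only a sample of the full cross-product, but generating it programmatically means the choice is about budget, not about how much copy someone had time to type.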

Creating variations is only half the story. The second part is deployment speed.

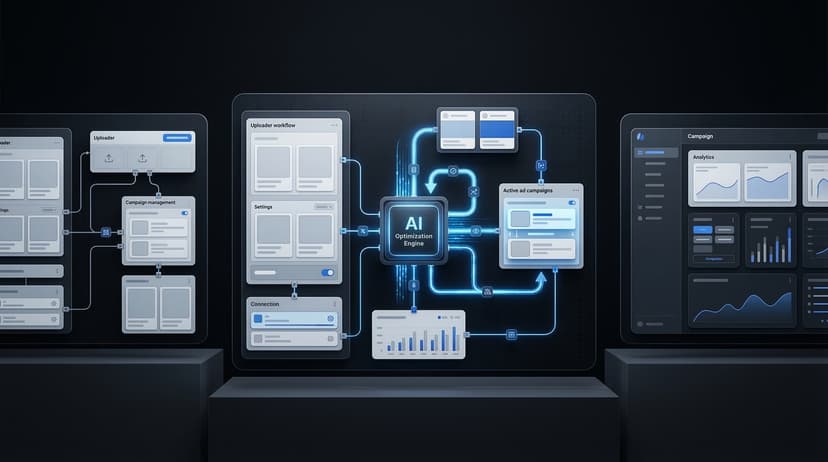

Uploader Workflows: Why the Facebook Ads Uploader Matters

Even when teams generate many creative ideas, they frequently hit a wall at the final step: getting those ads live.

Ads Manager was not designed for high-volume testing. Uploading dozens of variations manually is slow, error-prone, and demoralizing for the people doing it. A media buyer spending three hours on manual uploads is not doing the strategic work that actually moves accounts forward.

This is where a dedicated Facebook ads uploader becomes worth using.

Instead of uploading one ad at a time, teams batch their experiments and deploy them simultaneously. Instrumnt is built around this exact workflow:

- Prepare creative variations in a structured format

- Batch them into test groups organized by hypothesis

- Deploy the entire batch in minutes

This shifts experimentation from a time-consuming chore to a repeatable routine. Teams that batch ad creation instead of building one by one report saving 4 to 6 hours per week per account. At scale, across multiple accounts, that efficiency difference compounds.
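The "structured format" and "test groups organized by hypothesis" steps can be sketched in plain Python. This is not Instrumnt's format or API. The field names, the `validate` check, and the sample rows are all hypothetical, and the sketch stops before any upload call.

```python
from collections import defaultdict

# Hypothetical columns for one row of a creative sheet.
REQUIRED_FIELDS = ("hypothesis", "headline", "hook", "cta", "asset_path")


def validate(row):
    """Return a list of problems; an empty list means the row is ready."""
    return [
        f"missing {field}"
        for field in REQUIRED_FIELDS
        if not str(row.get(field) or "").strip()
    ]


def build_batches(rows):
    """Group valid rows by hypothesis and collect the rejected ones."""
    batches = defaultdict(list)
    rejected = []
    for row in rows:
        problems = validate(row)
        if problems:
            rejected.append((row, problems))
        else:
            batches[row["hypothesis"]].append(row)
    return dict(batches), rejected


rows = [
    {"hypothesis": "speed", "headline": "Ship orders 40% faster",
     "hook": "Slow checkout costs you sales.", "cta": "Start free trial",
     "asset_path": "assets/speed_01.png"},
    {"hypothesis": "speed", "headline": "Checkout in half the time",
     "hook": "Every extra click loses a buyer.", "cta": "See how it works",
     "asset_path": "assets/speed_02.png"},
    {"hypothesis": "cost", "headline": "Reduce cart abandonment costs",
     "hook": "", "cta": "Book a demo", "asset_path": None},
]

batches, rejected = build_batches(rows)
print({name: len(items) for name, items in batches.items()})
print([problems for _, problems in rejected])
```

Catching an empty hook or a missing asset before deployment is the kind of error checking that manual one-by-one uploads tend to skip.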

As testing volume increases, the algorithm receives richer signals. More experiments running in parallel means faster learning, which means faster identification of winning creative patterns.

AdEspresso has documented this pattern consistently: accounts that maintain higher creative testing velocity see compounding returns as the algorithm has more data to optimize against.

For a more detailed breakdown of this execution model, check out this guide on scaling Meta ads with bulk uploading.

The Continuous Creative Learning Loop

More tests are valuable on their own, but the real compounding advantage comes from using performance data to directly inform the next round of creative generation.

That is where AI-driven workflows close the loop.

Rather than relying on brainstorming sessions alone, an automated learning system can:

- Identify top-performing messaging structures from completed tests

- Detect recurring winning hooks and formats

- Surface the audience segments where specific creative patterns over-index

- Generate new variation briefs based on those patterns

Those new variations feed back into the testing pipeline. The cycle looks like this:

- Generate creative variations from a brief

- Launch experiments using a bulk uploader

- Collect performance data over 3 to 7 days

- Use AI to identify patterns and generate new iteration briefs

- Launch the next round of tests

This cycle keeps repeating. Instead of optimizing a handful of ads indefinitely, the account evolves through hundreds of micro-experiments per month.
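The pattern-identification step of the cycle can be approximated without any AI at all, which helps show what the loop is actually doing. The sketch below pools results by one creative dimension and ranks patterns by cost per acquisition. The result fields and numbers are hypothetical, and applying a 50-conversion floor to the pooled totals (rather than to each ad) is an assumption made for this example.

```python
from collections import defaultdict

MIN_CONVERSIONS = 50  # assumed floor before a pattern is trusted


def summarize_by(results, dimension):
    """Sum spend and conversions for each value of one creative dimension."""
    totals = defaultdict(lambda: {"spend": 0.0, "conversions": 0})
    for row in results:
        key = row[dimension]
        totals[key]["spend"] += row["spend"]
        totals[key]["conversions"] += row["conversions"]
    return dict(totals)


def winning_patterns(results, dimension, min_conversions=MIN_CONVERSIONS):
    """Rank dimension values by CPA, skipping those with too little data."""
    ranked = []
    for value, total in summarize_by(results, dimension).items():
        if total["conversions"] < min_conversions:
            continue  # not enough signal to call a winner or a loser
        ranked.append((value, total["spend"] / total["conversions"]))
    return sorted(ranked, key=lambda pair: pair[1])


# Hypothetical results from one completed test round.
results = [
    {"angle": "speed", "spend": 300.0, "conversions": 30},
    {"angle": "speed", "spend": 300.0, "conversions": 30},
    {"angle": "conversion", "spend": 1000.0, "conversions": 50},
    {"angle": "cost", "spend": 500.0, "conversions": 20},
]

ranking = winning_patterns(results, "angle")
print(ranking)
```

The top-ranked values become the seed for the next brief. The same function can be pointed at a `hook` or `cta` field to see which dimension is carrying the result.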

Creative fatigue is a constant pressure in Facebook ads. For cold audiences, performance typically degrades once ad frequency exceeds 3 to 5 impressions per user. An automated testing pipeline means fresh creative is always entering the rotation before fatigue becomes a performance crisis.
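A fatigue check along these lines is simple to sketch. Frequency is impressions divided by reach. The default cutoff of 3.0 is the lower end of the range mentioned above, and the ad names and numbers are made up.

```python
def frequency(impressions, reach):
    """Average impressions per person reached; zero reach gives 0.0."""
    return impressions / reach if reach else 0.0


def needs_refresh(ads, max_frequency=3.0):
    """Return the names of ads whose frequency exceeds the cutoff."""
    return [
        ad["name"]
        for ad in ads
        if frequency(ad["impressions"], ad["reach"]) > max_frequency
    ]


# Hypothetical cold-audience ads.
ads = [
    {"name": "speed_v01", "impressions": 9000, "reach": 2000},
    {"name": "speed_v02", "impressions": 5000, "reach": 2500},
    {"name": "cost_v01", "impressions": 0, "reach": 0},
]

stale = needs_refresh(ads)
print(stale)
```

Running a check like this on a schedule gives the pipeline a trigger for queuing replacement creative before performance drops.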

For more on how AI is reshaping Facebook ads optimization, see this detailed guide.

Why Most Automation Tools Still Miss the Point

Many platforms claim to offer AI optimization for Facebook ads. Most focus on improving the performance of existing ads rather than generating new experiments to run.

Revealbot is effective at rule-based automation — pausing underperformers, adjusting budgets based on ROAS thresholds, and scheduling dayparting. Madgicx focuses on optimization insights and AI recommendations for existing campaigns.

Both tools improve efficiency within a fixed set of ads. Neither solves the core problem: they do not expand the number of creative hypotheses being tested.

In Facebook ads today, creative discovery is the largest driver of growth. The accounts that learn fastest — that cycle through the most hypotheses in the shortest time — are the ones that find scalable creative before competitors do.

The most valuable automation accelerates:

- Creative generation and variation

- Experiment deployment volume

- Learning loops that feed back into new creative

That is why modern automation stacks prioritize uploader workflows and AI-assisted creative iteration alongside rule-based optimization engines.

The Real Outcome of Automated Facebook Ads Testing

Once teams adopt automated testing pipelines, several changes happen quickly.

Experiment volume increases. Instead of debating which creative might work, the team tests multiple possibilities simultaneously and lets data resolve the question.

Learning cycles shorten. Rather than waiting weeks for a winning angle to emerge, patterns appear within days because the volume of data is higher.

Media buyers shift roles. They move from campaign operators — spending their days on manual uploads and parameter adjustments — to experimentation strategists designing better hypotheses, interpreting results, and steering the next wave of creative tests.

The system handles the operational work. Human judgment focuses on the decisions that actually require it.

That shift defines where Facebook ads management is heading. Not smarter bid rules. Not more optimization dashboards. Automated experimentation pipelines that turn ideas into dozens of live tests faster than any manual process could.

Implementation Checklist

Use this checklist when setting up an automated Facebook ads testing pipeline:

- Map your current workflow from concept to live ad and identify where time is lost

- Set a weekly creative launch target (start with 20 variations per week, scale to 50)

- Choose a bulk uploader tool that supports batch deployment with error checking

- Establish a naming convention for test campaigns before you scale volume

- Define your test dimensions: headlines, hooks, CTAs, visuals — test one dimension at a time per experiment when possible

- Set minimum data thresholds before making optimization decisions (typically 50-100 conversions per ad)

- Build a structured review cadence — weekly minimum — to pull learnings and brief the next round

- Document winning patterns centrally so new creative briefs can reference them

- Review Meta Blueprint guidelines for creative specifications to avoid upload rejections at scale
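For the naming-convention item, one possible scheme is sketched below. The field order, the underscore separator, and the helper names are assumptions, not a Meta requirement. The point is only that a name built by a function can also be parsed by one when results are reviewed.

```python
from datetime import date

# Hypothetical field order for a test ad name.
FIELDS = ("launch", "concept", "angle", "dimension", "variant")
SEP = "_"


def slug(text):
    """Lowercase the text and join words with hyphens so SEP stays unambiguous."""
    return "-".join(text.lower().replace(SEP, " ").split())


def build_name(launch, concept, angle, dimension, variant):
    """Compose a name such as 20250106_faster-checkout_speed_headline_v03."""
    parts = [
        launch.strftime("%Y%m%d"),
        slug(concept),
        slug(angle),
        slug(dimension),
        f"v{variant:02d}",
    ]
    return SEP.join(parts)


def parse_name(name):
    """Split a name back into its fields; raise if the shape is wrong."""
    values = name.split(SEP)
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(values)}: {name!r}")
    return dict(zip(FIELDS, values))


name = build_name(date(2025, 1, 6), "Faster Checkout", "speed", "headline", 3)
print(name)
print(parse_name(name))
```

Encoding the tested dimension in the name makes it straightforward to group results by headline, hook, or CTA later without a separate lookup table.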

Frequently Asked Questions

How do I automate Facebook ads?

Start by identifying the three layers of your current workflow: creative generation, ad deployment, and performance analysis. Automate the most time-intensive layer first, which is usually ad deployment. Use a bulk uploader to replace manual Ads Manager uploads, then layer in AI tools for creative variation generation. Finally, build a structured review process that feeds performance insights back into new creative briefs.

What is an ad testing pipeline?

An ad testing pipeline is the end-to-end system that takes a creative concept and turns it into live experiments running in Facebook Ads Manager. It covers creative brief development, variation generation, naming and organization, bulk upload, QA review, launch, and performance data collection. A well-designed pipeline runs continuously, with new tests entering each week and performance data from previous tests informing what gets built next.

How many ads should I test per week?

A practical target for teams managing meaningful ad spend is 30 to 50 new creative variations per week. That typically translates to 6 to 10 distinct concepts with 3 to 5 variations per concept. Teams just starting to build out a testing workflow should aim for 15 to 20 per week as a foundation. The key is consistency: a steady weekly volume of new tests produces more reliable learning than occasional large batches.

What is the best tool for automated Facebook ads testing?

The right tool depends on which layer of automation you need most. For bulk deployment and launch speed, Instrumnt is built specifically for high-volume creative testing workflows. For post-launch rule automation, Revealbot handles budget and bid rules well. For campaign-level optimization insights, Madgicx provides useful dashboards. Most high-performing teams use a combination: a launch-focused tool for getting ads live quickly and an optimization tool for managing performance after launch.