Most Facebook ads teams optimize. Fewer build learning loops. The difference determines whether a team compounds its performance over time or plateaus after finding its first set of winning ads.

Optimization means improving what you already have: adjusting bids, refining audiences, pausing weak ads, scaling winners. That's necessary work. But optimization is reactive by nature — it responds to existing data within an existing creative set.

A learning loop is different. It treats every ad as a hypothesis, every result as information, and every week as an opportunity to generate better hypotheses than the week before. The loop doesn't just improve current campaigns — it improves the team's ability to build future ones.

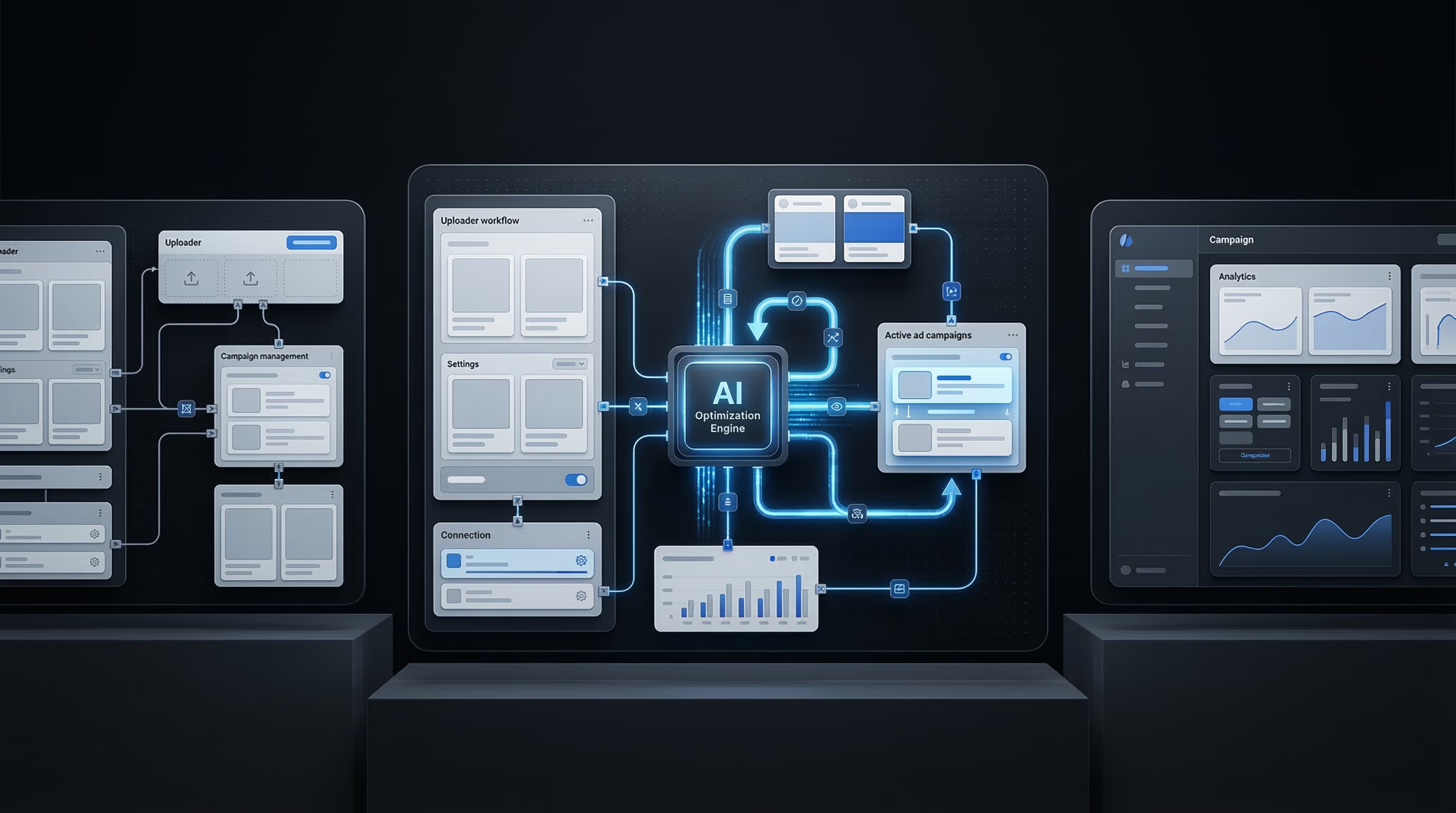

This guide explains what a Facebook ads learning loop is, why manual ad management breaks it, how to design one, and how to use tools like Claude Code and a bulk Facebook ads uploader to keep it running at volume.

What a Learning Loop Is (and Why It's Not Just "Optimization")

Optimization is a point-in-time activity. You look at current performance, make a change, and wait to see if the change helped. Each cycle is relatively self-contained.

A learning loop has a compounding structure. Each cycle generates not only performance improvements but also insights that make the next cycle more effective. The output of one loop becomes the input for the next.

In practice, the loop has four stages:

Launch: Deploy new creative variations against defined audiences. The emphasis is on meaningful variation across angles, not minor copy tweaks.

Measure: Track CTR, CPM, conversion rate, frequency, and cost-per-result. The WordStream Facebook advertising benchmarks put average CTR across all industries at 0.90%. Knowing where your account sits relative to that baseline helps you judge whether weak results point to a creative quality problem or a targeting problem.

Ideate: Use performance data to generate new hypotheses. Which angle outperformed? Which audience was most receptive? What objections appeared in comments? The data should produce a briefing, not just a pause/scale decision.

Repeat: Deploy the next generation of creative, informed by what you learned. The new batch should be meaningfully different from the last, not a rehash of the same concepts with different images.

The loop only compounds if all four stages run consistently. Skip measurement, and the ideation stage has no foundation. Skip ideation, and the next launch is just a guess. Skip the repeat, and you have optimization, not learning.

Why Manual Ad Management Breaks the Learning Cycle

Manual Facebook ad management introduces friction at every stage of the loop, and friction slows the cycle.

At the launch stage, building ads one at a time in Meta Ads Manager takes 15–30 minutes per ad. A team that launches 5 ads per week is not running a learning loop — it's running too few experiments to generate statistically meaningful signals. You need 10–20+ meaningful variations per audience to learn at a useful rate.

At the measurement stage, manual analysis (pulling reports, building pivot tables, comparing performance across campaigns by hand) introduces delay and inconsistency. Teams that only look at the numbers once a week can be up to a week behind the data.

At the ideation stage, manual briefing processes add more delay. A creative brief that takes two days to write and approve adds two days before the next launch can even begin.

The cumulative effect: a manual team might run one full loop cycle every two to three weeks. A team with the right infrastructure can run a full cycle every week. Over six months (about 26 weeks), that is roughly 8–13 loop cycles vs. up to 26. That's not a small gap. Because each cycle feeds the next, it's a compounding advantage.
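
The cycle counts come from simple division. A minimal sketch, assuming a six-month horizon of 26 weeks and no skipped weeks (both are assumptions, so the results are upper bounds rather than measurements):

```python
import math

WEEKS_IN_SIX_MONTHS = 26  # assumption: six months is roughly 26 weeks


def cycles_completed(weeks_per_cycle: float,
                     horizon_weeks: int = WEEKS_IN_SIX_MONTHS) -> int:
    """Number of full loop cycles that fit into the horizon."""
    if weeks_per_cycle <= 0:
        raise ValueError("weeks_per_cycle must be positive")
    return math.floor(horizon_weeks / weeks_per_cycle)


manual_slow = cycles_completed(3)  # one cycle every three weeks -> 8
manual_fast = cycles_completed(2)  # one cycle every two weeks   -> 13
weekly = cycles_completed(1)       # one cycle every week        -> 26
```

Any week a team skips lowers its count, which is why holding the cadence matters as much as the cycle length.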

Creative quality accounts for up to 56% of ROAS variation, according to Nielsen and Meta's joint research. That statistic reframes the stakes. The main driver of advertising ROI is not bid strategy or targeting — it's the quality and freshness of creative. A learning loop is the mechanism that improves creative quality systematically.

The Three Components of a Functional Learning Loop

A Facebook ads learning loop needs three components working together.

1. A launch infrastructure that supports volume

You cannot run a learning loop with 3 ads per week. The Meta delivery system needs enough creative variation to optimize against, and you need enough data points to distinguish signal from noise. This requires a bulk upload capability — a system that takes a structured creative dataset and deploys it to the Meta API without requiring manual builds in Ads Manager.

Instrumnt provides this. A batch of 20 new ad variations that would take 6–8 hours to build manually can be deployed in under an hour through a structured upload.

2. A measurement system that tracks the right signals

Not all metrics contribute equally to the learning loop. The most important signals are:

- CTR trend over the first 7 days (indicates creative resonance with the target audience)

- Cost-per-result against your target CPA (indicates whether the angle converts, not just engages)

- Frequency relative to audience size (signals when creative fatigue is approaching — cold audiences typically begin to fatigue at frequency 3.5+)

- Creative performance by concept family, not just by individual ad (identifies which underlying angle is driving results, not just which execution)

The Meta for Business Help Center documents Meta's ad relevance diagnostics — quality ranking, engagement rate ranking, and conversion rate ranking — which provide a structural view of how individual ads perform relative to ads competing for the same audience. These rankings are leading indicators that belong in any serious measurement system.

3. A systematic ideation process

Performance data should produce a specific brief for the next creative cycle — not a vague instruction to "try something different." A good learning loop brief answers these questions:

- Which angle family outperformed? Why might that be?

- Which audience segment responded most strongly?

- What did underperforming ads have in common?

- What angle hasn't been tested yet?

- Are there objections appearing in comments or engagement that suggest a new message approach?

Claude Code is useful here as a structured ideation tool. Feed it the performance data, the winning and losing angles, and the audience context, and ask for a prioritized list of new hypotheses. This turns data analysis into a systematic creative brief in minutes rather than hours.

How to Design a Feedback System

A feedback system translates raw performance data into actionable creative direction. Here's how to build one.

Step 1: Define your measurement window

Most meaningful signals emerge within the first 7 days for cold audiences. Set a consistent window — 7 days from launch — as your primary evaluation period. Don't optimize before then; let the Meta algorithm run.
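
One way to enforce the window is to gate evaluation on the launch date. This is only a sketch: the function name and the idea of storing a launch date per ad are assumptions, not part of any specific tool.

```python
from datetime import date, timedelta

MEASUREMENT_WINDOW_DAYS = 7  # the evaluation window suggested above


def ready_for_evaluation(launch_date: date, today: date,
                         window_days: int = MEASUREMENT_WINDOW_DAYS) -> bool:
    """True once an ad has been live for the full measurement window."""
    return today - launch_date >= timedelta(days=window_days)


# An ad launched on March 1 becomes eligible for review on March 8.
launched = date(2024, 3, 1)
eligible_on_day_6 = ready_for_evaluation(launched, date(2024, 3, 7))
eligible_on_day_7 = ready_for_evaluation(launched, date(2024, 3, 8))
```

Ads that fail the check stay out of the weekly report, which keeps early noise from driving pause or scale decisions.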

Step 2: Build a concept-level reporting view

Individual ad performance data is noisy. Group ads by concept family and look at aggregate performance. If five ads all used the "fear of long-term damage" angle and collectively outperformed the five ads using "productivity benefits," the signal is clear: the damage angle resonates with this audience. That's what the next brief should develop.
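
A concept-level view can be as simple as pooling the raw counts for every ad that shares a concept family. The sketch below assumes each ad row carries a hypothetical `concept` label plus impressions, clicks, spend, and conversions. The field names and sample numbers are illustrative, not real account data.

```python
from collections import defaultdict


def summarize_by_concept(ads):
    """Pool raw counts per concept family, then derive CTR and CPA."""
    totals = defaultdict(lambda: {"ads": 0, "impressions": 0, "clicks": 0,
                                  "spend": 0.0, "conversions": 0})
    for ad in ads:
        bucket = totals[ad["concept"]]
        bucket["ads"] += 1
        for key in ("impressions", "clicks", "spend", "conversions"):
            bucket[key] += ad[key]

    summary = {}
    for concept, t in totals.items():
        summary[concept] = {
            "ads": t["ads"],
            # Ratios are computed from pooled totals, not averaged per ad.
            "ctr": t["clicks"] / t["impressions"] if t["impressions"] else 0.0,
            "cpa": t["spend"] / t["conversions"] if t["conversions"] else None,
        }
    return summary


# Illustrative rows only.
sample_ads = [
    {"concept": "long_term_damage", "impressions": 10000, "clicks": 150,
     "spend": 120.0, "conversions": 6},
    {"concept": "long_term_damage", "impressions": 10000, "clicks": 90,
     "spend": 110.0, "conversions": 4},
    {"concept": "productivity_benefits", "impressions": 10000, "clicks": 80,
     "spend": 115.0, "conversions": 2},
    {"concept": "productivity_benefits", "impressions": 10000, "clicks": 60,
     "spend": 105.0, "conversions": 3},
]
concept_summary = summarize_by_concept(sample_ads)
```

Pooling totals before computing ratios keeps a low-spend ad with a lucky conversion from skewing the family-level picture.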

Step 3: Create a structured performance export

Export weekly performance data in a consistent format: angle, hook type, visual approach, CTR, CPM, conversion rate, frequency. This creates a growing dataset that reveals patterns over time — patterns that become your most valuable creative intelligence.
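
A fixed column order is what makes the weekly exports comparable over time. Here is a minimal sketch using Python's standard `csv` module. The `week` and `ad_name` columns are assumed additions for traceability, and the snake_case column names are a hypothetical convention.

```python
import csv
import io

# Columns from the list above, plus two assumed identifiers (week, ad_name).
EXPORT_FIELDS = ["week", "ad_name", "angle", "hook_type", "visual_approach",
                 "ctr", "cpm", "conversion_rate", "frequency"]


def export_rows(rows) -> str:
    """Serialize performance rows to CSV text with a fixed column order."""
    # Validate everything first so a bad row never yields a partial export.
    for row in rows:
        missing = [field for field in EXPORT_FIELDS if field not in row]
        if missing:
            raise ValueError(f"row is missing fields: {missing}")

    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=EXPORT_FIELDS)
    writer.writeheader()
    for row in rows:
        writer.writerow({field: row[field] for field in EXPORT_FIELDS})
    return buffer.getvalue()
```

Rejecting incomplete rows up front keeps gaps out of the long-running dataset, where they would be much harder to spot later.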

Step 4: Apply the ideation framework

Use the structured export to answer the briefing questions above. This is where Claude Code accelerates the process: it can analyze the export, identify patterns, and generate new angle hypotheses faster than manual analysis allows.

Step 5: Deploy the next cycle

Push the new creative batch through Instrumnt. Maintain the same audience parameters where possible, so the test isolates creative variation instead of changing creative and targeting at the same time.

Using AI Tools to Close the Loop Faster

The slowest stage of most learning loops is the transition from measurement to ideation. Data comes in. Someone needs to analyze it, draw conclusions, draft a brief, get it reviewed, and hand it to the creative team. That process can easily take a week.

Claude Code is an AI coding assistant that media buyers use to build automated scripts for ad generation and analysis. In a learning loop context, it serves two functions:

Analysis acceleration: Feed Claude Code a structured performance export and a prompt asking for pattern identification. It can identify which angles outperformed, which fell below account baseline, and which combinations of hook type and visual approach showed the most consistency. That analysis, done manually, might take two to three hours. Done with Claude Code, it takes minutes.

Brief generation: Once the pattern analysis is complete, Claude Code can generate a structured creative brief — including specific angle hypotheses, suggested hooks, and a prioritized testing plan — based on the data. The brief still requires human review and editorial judgment, but the generation step is automated.

Meta Blueprint covers the technical mechanics of how Meta's learning phase works and what disrupts it. Understanding the algorithm's behavior — specifically, what constitutes a "learning phase reset" and why it matters — helps design loop cycles that don't unnecessarily restart the algorithm's optimization process.

The Role of a Facebook Ads Uploader in Keeping the Loop Running at Volume

A learning loop that generates 5 new creative variations per week is a start. One that generates 20 per week is transformative. The difference is almost entirely a function of deployment infrastructure.

Manual ad building is the binding constraint. Even a well-organized creative team that produces excellent variation concepts will be limited by how fast those concepts can be translated into live ads. If each ad takes 20 minutes to build manually, and the team has 4 hours per week for deployment, the ceiling is 12 ads per week — and that's before accounting for QA, review cycles, and naming cleanup.

A bulk uploader removes that ceiling. With Instrumnt, the same team can deploy 50+ ads in the time it would take to manually build 10. The creative variations are structured in a spreadsheet, validated before upload, and pushed to the Meta API in bulk. Naming conventions are applied uniformly. UTM parameters are consistent. The QA step happens before launch, not during.
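
To make "validated before upload" concrete, here is a hedged sketch of a pre-upload check on spreadsheet rows. It does not reflect Instrumnt's actual file format or validation rules. The column names (`ad_name`, `landing_url`, `primary_text`), the `concept_hook_vNN` naming pattern, and the required UTM keys are all assumptions chosen for illustration.

```python
import re
from urllib.parse import parse_qs, urlparse

# Hypothetical naming convention: concept_hook_v01
NAME_PATTERN = re.compile(r"^[a-z0-9]+_[a-z0-9]+_v\d{2}$")
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def validate_row(row: dict) -> list[str]:
    """Return a list of problems found in one spreadsheet row."""
    errors = []
    if not NAME_PATTERN.match(row.get("ad_name", "")):
        errors.append("ad_name does not match concept_hook_vNN")

    # parse_qs ignores blank values, so "utm_source=" counts as missing.
    query = parse_qs(urlparse(row.get("landing_url", "")).query)
    for key in REQUIRED_UTMS:
        if key not in query:
            errors.append(f"missing {key}")

    if not row.get("primary_text", "").strip():
        errors.append("primary_text is empty")
    return errors


def validate_batch(rows: list[dict]) -> dict[int, list[str]]:
    """Map row index to its errors. An empty dict means the batch is clean."""
    problems = {}
    for index, row in enumerate(rows):
        errors = validate_row(row)
        if errors:
            problems[index] = errors
    return problems
```

Running a check like this on the whole batch before anything is pushed is what moves QA ahead of launch instead of into it.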

This infrastructure change directly enables a faster learning loop. More experiments per cycle mean more data. More data means faster pattern identification. Faster pattern identification means better briefs for the next cycle. Over months, this compounds into a meaningful competitive advantage.

Teams that refresh creatives every 7–14 days maintain CPMs 15–25% lower than those that let ads run stale. The bulk uploader is what makes that refresh cadence operationally feasible without burning out the team.

For a detailed walkthrough of how to structure the bulk deployment workflow, see How to Build a Facebook Ads Bulk Testing System with Instrumnt and Claude Code.

How to Prevent Creative Fatigue Inside the Loop

A learning loop that doesn't account for creative fatigue will eventually optimize itself into a corner. The best-performing angles from week 3 will be fatigued by week 8. Without a fatigue management mechanism built into the loop, the team will be scaling spend into declining assets.

The integration is straightforward: fatigue signals should be a standard part of the measurement stage. Track frequency, CTR trend, and CPM movement for every active creative set. When a cold-audience ad hits frequency 3.5 or CTR drops 20%+ from its first-week baseline, it enters a refresh queue rather than continuing to run.
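
The refresh rule above translates directly into a small check. This sketch uses the two thresholds from the text. The function and field names are assumptions, and the sample ads are illustrative.

```python
FREQUENCY_THRESHOLD = 3.5   # cold-audience frequency ceiling from the text
CTR_DROP_THRESHOLD = 0.20   # 20% relative drop from the first-week baseline


def needs_refresh(frequency: float, baseline_ctr: float,
                  current_ctr: float) -> bool:
    """True when either fatigue signal from the rule above has fired."""
    if frequency >= FREQUENCY_THRESHOLD:
        return True
    if baseline_ctr > 0:
        relative_drop = (baseline_ctr - current_ctr) / baseline_ctr
        return relative_drop >= CTR_DROP_THRESHOLD
    return False


def build_refresh_queue(ads: list[dict]) -> list[str]:
    """Names of active ads that should move to the refresh queue."""
    return [ad["ad_name"] for ad in ads
            if needs_refresh(ad["frequency"], ad["baseline_ctr"],
                             ad["current_ctr"])]


# Illustrative values only.
active_ads = [
    {"ad_name": "damage_question_v01", "frequency": 3.6,
     "baseline_ctr": 0.012, "current_ctr": 0.0115},
    {"ad_name": "damage_stat_v02", "frequency": 2.1,
     "baseline_ctr": 0.012, "current_ctr": 0.009},
    {"ad_name": "productivity_demo_v01", "frequency": 1.9,
     "baseline_ctr": 0.012, "current_ctr": 0.011},
]
refresh_queue = build_refresh_queue(active_ads)
```

Keeping the thresholds as named constants makes them easy to tune per account once the loop has enough history to show where fatigue actually sets in.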

The ideation stage should always produce two types of briefs: net-new angle exploration and refresh variants for fatigued concepts. A purely net-new approach ignores the evidence base from proven angles; a purely refresh approach runs out of fresh angles eventually. Both types belong in every cycle.

For a complete guide to the fatigue detection and refresh workflow, see Facebook Ads Creative Fatigue Detection.

What a Mature Learning Loop Looks Like

A team that has been running a consistent learning loop for 90 days will typically see:

- A documented library of proven angles, ranked by performance across audiences

- A clear map of which psychological hooks resonate with which audience segments

- Predictable CPM ranges by angle type and audience temperature, because the system now has enough data to set reasonable expectations

- A faster briefing process, because each new cycle builds on the pattern database from previous cycles

- Lower CPA on prospecting campaigns, reflecting creative that is better matched to each audience. As a baseline, advertisers running 3+ ad variations per audience see up to 30% lower CPA.

The 90-day mark is roughly when the loop transitions from "experiment mode" to "compound mode." Prior to that, each cycle is primarily learning. After that, each cycle increasingly refines and extends a growing evidence base.

The teams that reach compound mode are the ones that held the cadence consistently, even in weeks where no obvious winners emerged. The learning loop doesn't only produce wins — it also definitively eliminates losing approaches, which is equally valuable.

Common Loop Design Mistakes

Testing too few variations: Five ads is not enough to learn from. You need enough variation to distinguish angle performance from execution performance, and enough volume for Meta's delivery system to find the right audience segments. Aim for 10–20 meaningful variations per cycle minimum.

Measuring too early: Meta ads need time to exit the learning phase. Evaluating performance at 48 hours produces noise, not signal. A 7-day minimum measurement window is standard for most campaign objectives.

Drawing angle-level conclusions from ad-level data: One ad performing well doesn't mean the angle is working. One ad performing poorly doesn't mean the angle is wrong. Group ads by concept family before drawing conclusions.

Treating the loop as a campaign structure: The learning loop is an operational discipline, not a campaign type. It runs across all your Meta advertising activity, not inside a specific campaign.

Letting the ideation stage drift into guessing: If the brief for the next cycle isn't grounded in specific data from the previous cycle, you're not running a learning loop — you're running a random creative testing program. The data has to feed the brief explicitly.

FAQ: Facebook Ads Learning Loops

What is a Facebook ads learning loop?

A Facebook ads learning loop is a structured four-stage cycle: launch creative variations, measure performance signals, use those signals to ideate better hypotheses, and repeat. Unlike simple optimization, which improves existing ads, a learning loop systematically improves the team's ability to generate effective ads over time. It compounds: each cycle produces better inputs for the next.

How long does it take for Facebook ads to learn?

Meta's "learning phase" is the period during which the delivery algorithm is still learning how best to deliver your ad. Exiting it typically requires about 50 optimization events (conversions, clicks, or other specified outcomes depending on your objective). For most campaigns, this takes 7–14 days. Significant changes to budget, targeting, creative, or bid strategy restart the learning phase, which is why bulk creative testing should be structured to avoid unnecessary resets of active campaigns.
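
As a rough planning aid, the time to collect those events can be estimated from the daily event rate. This is a back-of-the-envelope sketch that assumes a steady rate and the approximate 50-event figure above. It is not a model of Meta's actual delivery system.

```python
import math

LEARNING_PHASE_EVENTS = 50  # approximate figure cited above


def days_to_exit_learning(daily_optimization_events: float) -> int:
    """Estimated days to reach the event threshold at a steady daily rate."""
    if daily_optimization_events <= 0:
        raise ValueError("daily_optimization_events must be positive")
    return math.ceil(LEARNING_PHASE_EVENTS / daily_optimization_events)


at_five_per_day = days_to_exit_learning(5)   # 50 / 5  -> 10 days
at_ten_per_day = days_to_exit_learning(10)   # 50 / 10 -> 5 days
```

If the estimate runs far past two weeks, the ad set is probably spread too thin to learn, which is a signal to consolidate budget or pick an optimization event that occurs more often.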

How does the Meta algorithm learn from my ads?

Meta's delivery system uses machine learning to predict which users are most likely to perform your target action. It learns by observing engagement and conversion patterns: which users clicked, which converted, which gave negative feedback. Over time, it refines the delivery to favor users who match the profile of past converters. Creative fatigue disrupts this process by degrading the engagement signals that the algorithm depends on.

How is a learning loop different from A/B testing?

A/B testing is a specific tactic within a learning loop: it compares two variations to determine which performs better. A learning loop is an ongoing operational system that uses A/B test results, along with other performance signals, to generate progressively better hypotheses over multiple cycles. A/B testing produces a winner; a learning loop produces compound improvement.

How often should I run through a loop cycle?

Weekly cycles are the standard for high-performing teams. Monthly cycles are too slow — they don't generate enough data to compound meaningfully. Teams that run weekly cycles and refresh creatives every 7–14 days maintain CPMs 15–25% lower than slower teams and accumulate significantly more creative intelligence over a quarter.

For the operational foundation this workflow depends on, see How to Scale Meta Ads with Bulk Uploading and 5 tips for media buyers to work faster and scale smarter. For the fatigue management system that runs inside the loop, see Facebook Ads Creative Fatigue Detection.

Ready to run your first loop cycle? Explore Instrumnt's features and pricing.