The Scenario: A Founder Trying to Reverse-Engineer Competitor Ads

On a Thursday afternoon, Daniel—the founder of a B2B SaaS startup—had three tabs open: Meta Ads Manager, a spreadsheet labeled “Competitor Ads,” and a browser window filled with saved ads from the Meta Ad Library.

For the past five days, he’d been focused on one question: How do I find the Facebook ads my competitors are running—and, more importantly, how can I use this information to improve my own campaigns?

He wasn’t just guessing. He had a structured process:

- Searched competitors in the Ad Library

- Categorized ads by hook, format, and CTA

- Logged landing pages and offers

It seemed like a solid strategy.

But nothing changed.

CPA stayed flat. CTR hovered around 0.90% (WordStream 2024 benchmarks). And even though he had a clearer picture of what his competitors were doing, his own Facebook ads weren’t getting any better.

That’s when he had to ask himself: What’s the point of competitor research?

The Dead End: Why Ad Discovery Didn’t Improve CPA

Daniel’s workflow wasn’t unconventional. It’s what most guides recommend: Use the Meta Ads Guide to understand formats, browse competitor ads, save examples, and try to replicate what works.

The problem wasn’t the effort—it was the execution.

Every competitor ad he found came with its own context:

- Different brand reputation

- Different audience

- Different creative history

What appeared to be a winning ad was likely the result of dozens, if not hundreds, of tests.

By simply copying the end result, Daniel missed the process that made those ads successful.

Worse still, analyzing ads gave him a false sense of progress. He felt like he was moving forward, but in reality, he wasn’t running any new tests himself.

This is where most teams hit a wall.

They treat competitor ads as answers instead of inputs.

As we covered in Why the Facebook Ad Library Won’t Help You Find Winning Ads (And What Will), observation without execution doesn’t move the needle.

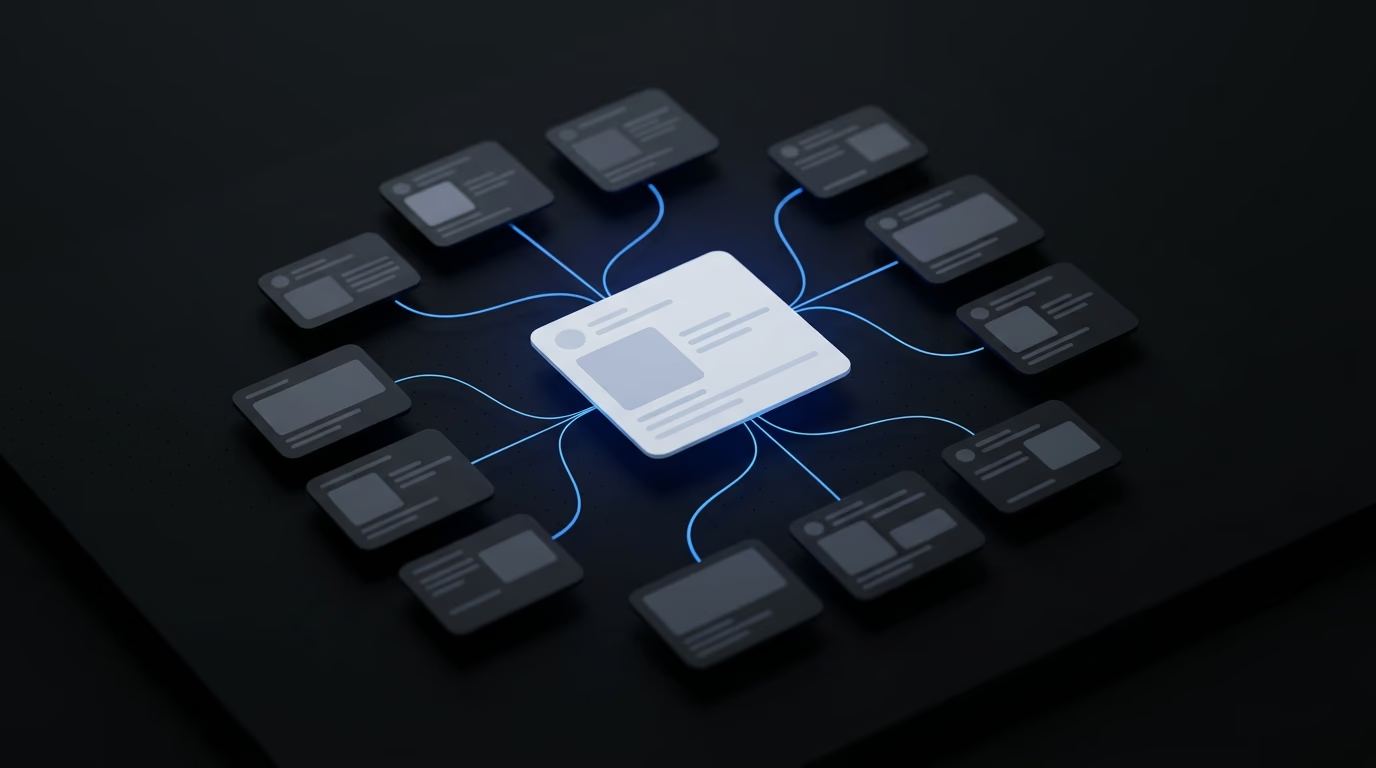

The Insight: Competitor Ads Are Inputs, Not Answers

The turning point came during a weekly review when Daniel noticed something simple.

His team had tested just six ads in the past two weeks.

Meanwhile, competitors were probably testing dozens.

That gap reframed the whole exercise.

Instead of asking:

“How do we replicate this ad?”

The team started asking:

“How do we turn this one ad into 10 tests?”

This shift was critical, especially when you consider how Facebook ads work in 2026:

- Only 5–10% of creatives actually win (industry testing data)

- Advertisers running 3+ variations per audience see up to 30% lower CPA (Meta data)

- Creative quality drives up to 56% of ROAS variation (Nielsen and Meta research)

The takeaway: Volume and iteration matter more than perfection.

Competitor ads are helpful—but only if you use them as raw material for generating variations.

If you don't increase testing volume, insights alone won't lower your CPA.
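A quick back-of-the-envelope calculation shows why volume matters. Assume, as a simplification, that each creative independently has the same chance p of winning (the 5–10% range above). The chance that a batch of n creatives contains at least one winner is then 1 - (1 - p)^n. The sketch below compares six ads, roughly what Daniel's team tested, with a batch of 25. The independence assumption and the batch sizes are illustrative and do not come from Meta data.

```python
def p_at_least_one_winner(win_rate: float, n_ads: int) -> float:
    """Chance that at least one of n_ads creatives wins.

    Assumes every creative wins independently with the same probability
    win_rate. This is a simplification of how real ads perform.
    """
    if not 0.0 <= win_rate <= 1.0:
        raise ValueError("win_rate must be between 0 and 1")
    if n_ads < 0:
        raise ValueError("n_ads must be non-negative")
    # Complement of "every single ad loses".
    return 1.0 - (1.0 - win_rate) ** n_ads


if __name__ == "__main__":
    # Win rates from the 5-10% range quoted above; batch sizes are illustrative.
    for win_rate in (0.05, 0.10):
        for n_ads in (6, 25):
            chance = p_at_least_one_winner(win_rate, n_ads)
            print(f"win rate {win_rate:.0%}, {n_ads:>2} ads -> {chance:.0%}")
```

Under these assumptions, a 5% win rate gives six ads about a 26% chance of producing at least one winner. Twenty-five ads raise that to about 72%. Neither batch is guaranteed a winner, but the larger batch makes finding one far more likely.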

Mini Example: Expanding One Competitor Ad Into 10 Test Variants

Daniel picked one competitor ad that appeared repeatedly in the Meta Ad Library.

It was a simple video with a hook:

“Still managing your ads manually?”

Instead of copying it directly, the team broke it into components:

- Problem framing

- Audience (media buyers)

- Format (short video)

- CTA (book a demo)

Then they expanded each component.

Hook variations:

- “Your ad account isn’t scaling—and it’s not your budget”

- “Most teams waste hours inside Ads Manager every week”

- “Manual ad testing is killing your growth”

Angle variations:

- Speed (launch faster)

- Volume (test more ads)

- Efficiency (reduce wasted spend)

Format variations:

- Static image

- Carousel

- Short-form video

In just a few hours, one competitor ad had turned into 10 distinct testable ideas.

Not final ads—testable variants.

That’s the key.
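The expansion step can be sketched in a few lines of Python. Three hooks, three angles, and three formats give 27 possible combinations. The sketch below enumerates all of them, then picks a smaller batch (10, to match the example) in which each hook, angle, and format appears a similar number of times. The helper names `full_grid` and `balanced_variants` are hypothetical, and the Latin-square rotation is an illustrative choice. It is not necessarily how Daniel's team chose their ten ideas.

```python
from itertools import product

# Components from the breakdown above; the CTA ("book a demo") stays fixed.
HOOKS = [
    "Your ad account isn't scaling, and it's not your budget",
    "Most teams waste hours inside Ads Manager every week",
    "Manual ad testing is killing your growth",
]
ANGLES = ["Speed", "Volume", "Efficiency"]
FORMATS = ["Static image", "Carousel", "Short-form video"]


def full_grid():
    """Every hook x angle x format combination: 3 * 3 * 3 = 27 candidates."""
    return list(product(HOOKS, ANGLES, FORMATS))


def balanced_variants(n):
    """Return n distinct (hook, angle, format) combinations in rotation order.

    Latin-square ordering: for shift s, hook i is paired with angle j and
    format (i + j + s) % k. Within the first k * k picks (s = 0), every
    hook/angle, hook/format and angle/format pair appears exactly once.
    Later shifts cover the remaining combinations without repeats.
    """
    k = len(FORMATS)
    if not len(HOOKS) == len(ANGLES) == k:
        raise ValueError("this sketch assumes equally sized component lists")
    picks = [
        (HOOKS[i], ANGLES[j], FORMATS[(i + j + s) % k])
        for s in range(k)
        for i in range(k)
        for j in range(k)
    ]
    return picks[:n]


if __name__ == "__main__":
    print(f"{len(full_grid())} possible combinations")
    for num, (hook, angle, fmt) in enumerate(balanced_variants(10), start=1):
        print(f"{num:>2}. [{angle} / {fmt}] {hook}")
```

Plain random sampling from `full_grid()` would also work. The rotation simply guarantees that no single hook or format dominates a small batch.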

As discussed in Why Your Creative Testing Is Failing (And How to Automate the Solution), teams often spend too much time refining a handful of ads and don't test enough variations.

Daniel’s team did the opposite—they lowered the bar for each individual ad, but raised the number of tests.

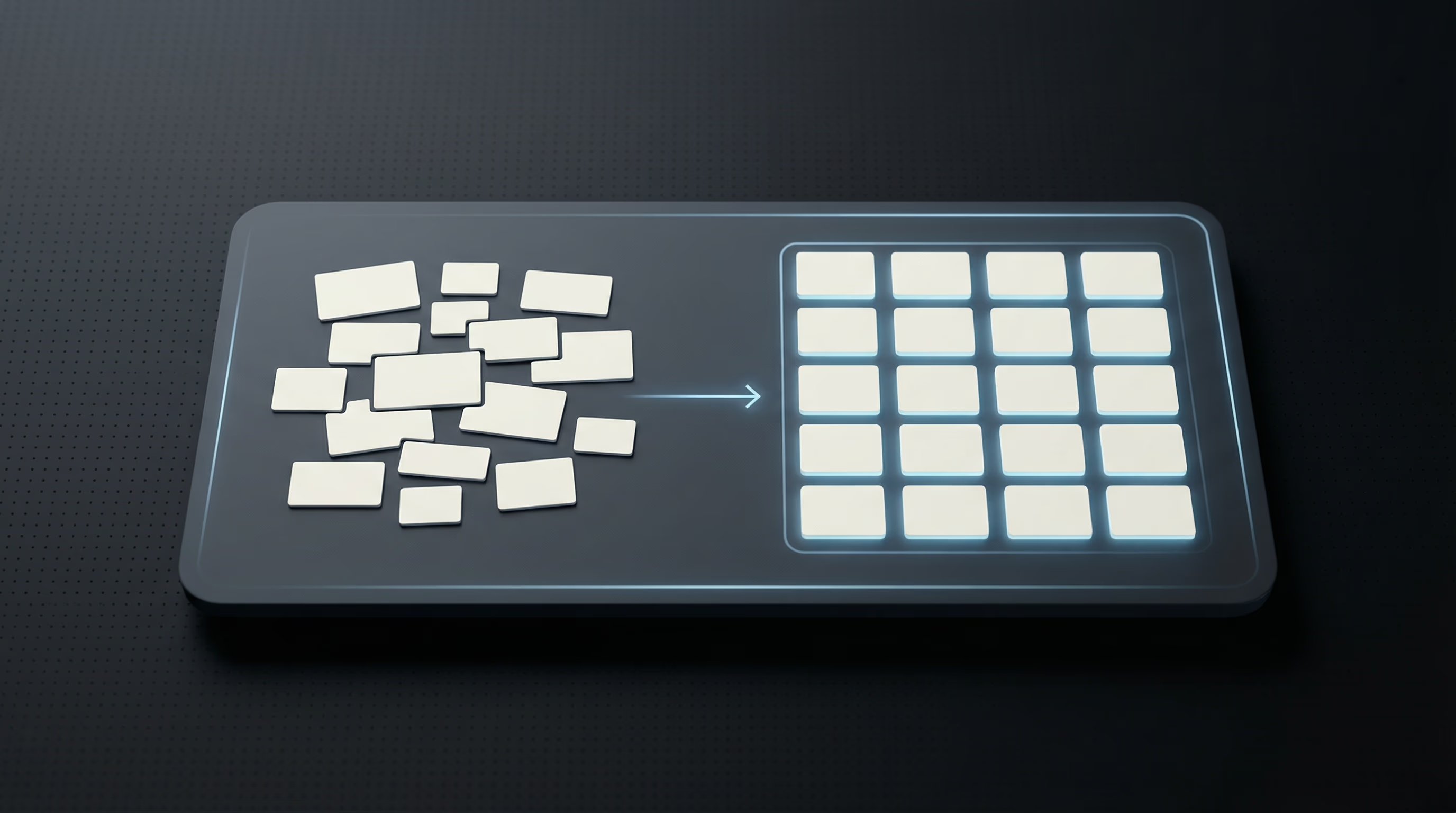

Uploader Workflow: Deploying Variants at Speed With Instrumnt

Here came the next bottleneck.

Creating 10 ideas was easy.

Deploying them in Ads Manager? Not so much.

Building an ad manually typically takes 15–30 minutes. At that rate, even a modest testing plan becomes overwhelming.

That’s when the team adopted a Facebook ads uploader workflow using Instrumnt.

Instead of building ads one at a time, they:

- Structured all variations in a spreadsheet

- Used AI to generate copy variations

- Mapped creatives to each variation

- Uploaded everything in bulk using Instrumnt
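Here is a minimal sketch of the "structure all variations in a spreadsheet" step, using Python's standard `csv` module. The hook column is where AI-generated copy would go. The column names (`ad_name`, `hook`, `angle`, `format`, `cta`, `creative_file`), the naming convention, and the file paths are all hypothetical placeholders. This is not Instrumnt's import format, which this article doesn't describe, so map the columns to whatever template your uploader expects.

```python
import csv
from pathlib import Path

# Hypothetical column layout; rename columns to match your uploader's template.
COLUMNS = ["ad_name", "hook", "angle", "format", "cta", "creative_file"]


def slugify(text: str) -> str:
    """Lowercase and hyphenate a label, e.g. 'Static image' -> 'static-image'."""
    return text.lower().replace(" ", "-")


def ad_name(index: int, angle: str, fmt: str) -> str:
    """Hypothetical naming convention, e.g. V01_speed_static-image."""
    return f"V{index:02d}_{slugify(angle)}_{slugify(fmt)}"


def write_variant_sheet(variants, path, cta="Book a demo"):
    """Write one row per (hook, angle, format) variant; return the row count."""
    rows = []
    for i, (hook, angle, fmt) in enumerate(variants, start=1):
        name = ad_name(i, angle, fmt)
        rows.append({
            "ad_name": name,
            "hook": hook,
            "angle": angle,
            "format": fmt,
            "cta": cta,
            # Placeholder path for the creative mapped to this variant.
            "creative_file": f"creatives/{name}",
        })
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=COLUMNS)
        writer.writeheader()
        writer.writerows(rows)
    return len(rows)


if __name__ == "__main__":
    variants = [
        ("Most teams waste hours inside Ads Manager every week", "Speed", "Static image"),
        ("Manual ad testing is killing your growth", "Volume", "Carousel"),
    ]
    out = Path("variants.csv")
    print(f"wrote {write_variant_sheet(variants, out)} rows to {out}")
```

A predictable `ad_name` that encodes the angle and format makes it easy to group results by component later, without relying on free-text ad names.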

The results were immediate.

Bulk upload tools can reduce ad creation time by 80–90% (AdManage.ai 2026 data). Work that had taken half a day now took under 30 minutes.

But the real impact was in how the team’s mindset shifted.

They stopped asking:

“Is this ad ready?”

And started asking:

“How many variations can we launch this week?”

For teams evaluating platforms like AdEspresso, Revealbot, or Madgicx, the difference is clear: most of these tools focus on campaign management and optimization, not creative throughput.

Instrumnt’s value lay in speed and execution.

For a deeper breakdown of this workflow, check out How to Build a Facebook Ads Bulk Testing System with Instrumnt and Claude Code.

What Changed After the Workflow Shift

Within three weeks, results had shifted, not because of a single winning ad but because of consistent testing velocity.

| Metric | Before | After |
|---|---|---|
| Ads launched per week | 3–6 | 20–30 |
| Time to build one ad | 20 min | ~2–3 min |
| CTR | 0.8–1.0% | 1.2–1.6% |
| CPA trend | Flat | Decreasing |
| Learning cycles per month | 1–2 | 4–6 |
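The per-ad times in the table are consistent with the 80–90% savings cited for bulk upload. The sketch below is plain arithmetic on the table's own figures (20 minutes before, roughly 2–3 minutes after). It also assumes 25 ads per week, the middle of the 20–30 range. The helper names are illustrative.

```python
def time_reduction(before_min: float, after_min: float) -> float:
    """Fractional reduction in per-ad build time (0.85 means 85% less time)."""
    if before_min <= 0:
        raise ValueError("before_min must be positive")
    return 1.0 - after_min / before_min


def weekly_build_hours(ads_per_week: int, minutes_per_ad: float) -> float:
    """Hours per week spent building ads at a given per-ad time."""
    return ads_per_week * minutes_per_ad / 60.0


if __name__ == "__main__":
    before = 20  # minutes per ad before the workflow change (table above)
    for after in (2, 3):  # minutes per ad after the change (table above)
        print(f"{before} -> {after} min/ad: {time_reduction(before, after):.0%} less time")
    # Assumed volume: 25 ads a week, the middle of the 20-30 range.
    print(f"manual: {weekly_build_hours(25, before):.1f} h/week")
    print(f"bulk:   {weekly_build_hours(25, 2):.1f}-{weekly_build_hours(25, 3):.1f} h/week")
```

The implied 85–90% reduction falls within the 80–90% range. At 25 ads a week, build time drops from roughly eight hours to about one, which is what makes the higher launch volume sustainable.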

The improvement didn’t come from a breakthrough ad.

It came from faster feedback loops.

More tests meant more data. More data meant clearer signals. And clearer signals meant better decisions.

From Observation to System: What Most Teams Miss

Daniel set out to learn how to find competitor ads on Facebook.

He succeeded.

But the real advantage came when he shifted his approach:

- Turning one ad into multiple testable hypotheses

- Using AI to quickly generate new variations

- Deploying ads at scale with a Facebook ads uploader

- Measuring outcomes, not formats

This is where most teams fall short.

They focus heavily on discovery but neglect execution.

Even platforms like Smartly.io tend to focus on workflow management, but without a system for generating and testing ideas, throughput still stalls.

The real system looks like this:

Inputs (competitor ads) → Expansion (AI + variation) → Deployment (bulk upload) → Feedback (performance data)

It’s the feedback loop—not the discovery—that drives results.
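The feedback step is where the variant structure pays off. Because each ad is tagged with its hook, angle, and format, you can roll results up by component and see which idea is working, not just which ad. The sketch below assumes you have exported per-ad results with spend, impressions, clicks, and conversions. The `AdResult` type and its field names are hypothetical. For each component value, the sketch computes CTR as clicks ÷ impressions and CPA as spend ÷ conversions.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdResult:
    """Hypothetical per-ad export row; map your reporting columns onto it."""
    hook: str
    angle: str
    fmt: str  # creative format, e.g. "Carousel"
    spend: float
    impressions: int
    clicks: int
    conversions: int


def rollup(results, dimension):
    """Aggregate results by one component: "hook", "angle" or "fmt".

    Returns {value: {"ctr": ..., "cpa": ...}}. CPA is None when a group
    has no conversions yet, instead of dividing by zero.
    """
    totals = defaultdict(
        lambda: {"spend": 0.0, "impressions": 0, "clicks": 0, "conversions": 0}
    )
    for r in results:
        t = totals[getattr(r, dimension)]
        t["spend"] += r.spend
        t["impressions"] += r.impressions
        t["clicks"] += r.clicks
        t["conversions"] += r.conversions
    summary = {}
    for value, t in totals.items():
        ctr = t["clicks"] / t["impressions"] if t["impressions"] else 0.0
        cpa: Optional[float] = t["spend"] / t["conversions"] if t["conversions"] else None
        summary[value] = {"ctr": ctr, "cpa": cpa}
    return summary
```

For example, `rollup(results, "angle")` would show whether the Speed, Volume, or Efficiency angle converts more cheaply across all the ads that used it. Pooling spend and conversions before dividing, rather than averaging each ad's CPA, keeps small, low-spend ads from skewing the comparison.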

Conclusion: Turning Observation Into Performance Gains

Competitor research isn’t useless.

It’s just incomplete.

If your workflow ends with “we understand what competitors are doing,” you’re stopping too early.

The teams that win treat competitor ads as starting points—not final solutions.

They don’t try to copy success. They try to out-test it.

And in an ecosystem where Meta reaches 3.29 billion daily active people across its apps (Meta Q3 2024 earnings), the speed of testing, not the depth of analysis, determines the winners.

FAQ

Can I legally use competitor Facebook ads for inspiration?

Yes. It’s common to draw inspiration from competitor ads as long as you avoid copying copyrighted elements or misusing branding. Focus on adapting ideas, not duplicating them. For more details, check out Meta Advertising Standards.

What tools help automate ad variant creation from competitor ads?

AI-driven tools for copy generation, combined with bulk-upload platforms like Instrumnt, make it easy to turn one ad idea into many variations quickly.

How do I measure whether competitor-inspired testing improves my CPA?

Track performance across multiple variations, not just individual ads. Look at trends in CPA, CTR,